def pose_to_matrix(x, y, theta):

"""Convert pose (x, y, theta) to SE(2) matrix."""

c, s = np.cos(theta), np.sin(theta)

return np.array([[c, -s, x], [s, c, y], [0, 0, 1]])

def matrix_to_pose(T):

"""Extract (x, y, theta) from SE(2) matrix."""

return T[0, 2], T[1, 2], np.arctan2(T[1, 0], T[0, 0])

def compute_residual(xi, xj, z_ij):

"""

Compute residual for edge (i, j).

xi, xj: poses (x, y, theta)

z_ij: measured relative pose (dx, dy, dtheta)

Returns: residual vector [ex, ey, etheta]

Residual: e = [R_i^T @ (p_j - p_i); theta_j - theta_i] - z_ij

"""

x_i, y_i, theta_i = xi[0], xi[1], xi[2]

x_j, y_j, theta_j = xj[0], xj[1], xj[2]

dx_meas, dy_meas, dtheta_meas = z_ij[0], z_ij[1], z_ij[2]

c, s = np.cos(theta_i), np.sin(theta_i)

# Predicted relative position (j in frame i)

dx_world = x_j - x_i

dy_world = y_j - y_i

dx_pred = c * dx_world + s * dy_world

dy_pred = -s * dx_world + c * dy_world

dtheta_pred = theta_j - theta_i

# Residual = predicted - measured

e = np.array([

dx_pred - dx_meas,

dy_pred - dy_meas,

np.arctan2(np.sin(dtheta_pred - dtheta_meas),

np.cos(dtheta_pred - dtheta_meas)) # Wrap angle

])

return ePose Graph Optimization

Correcting Odometry Drift with Loop Closures

Instructor: Hasan A. Poonawala

Mechanical and Aerospace Engineering

University of Kentucky, Lexington, KY, USA

Topics:

Odometry Drift Problem

Graph Structure

Nonlinear Least Squares Formulation

Lesson Overview

- Problem: Odometry drift accumulates over time

- Solution: Add loop closure constraints and optimize

- Formulation: Pose graph as nonlinear least squares

- Implementation: Gauss-Newton on sparse graphs

Paper: LOAM

Paper: Fast-LIO2

https://www.youtube.com/watch?v=uVcBa6NXAbk

Part 1: The Drift Problem

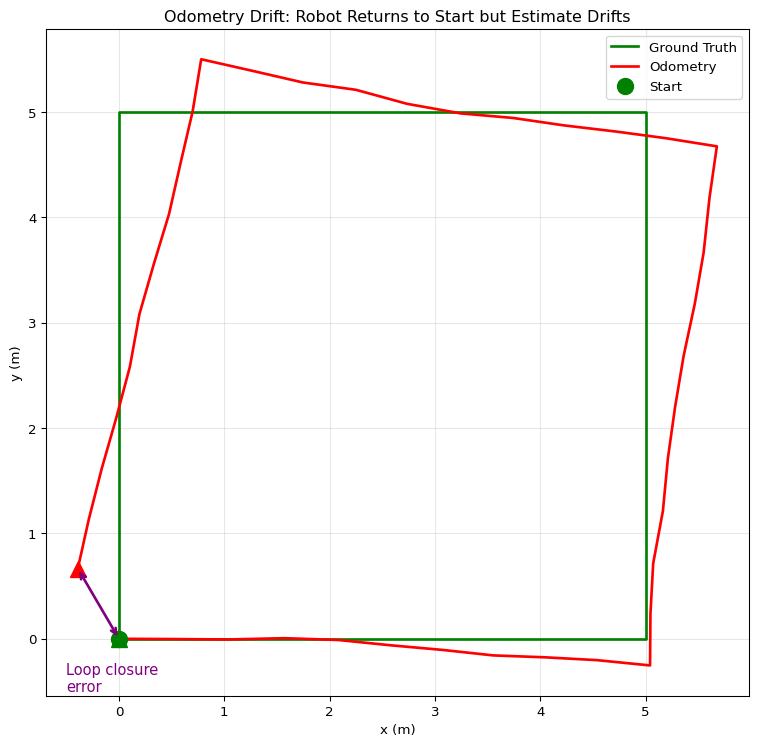

Recall: Odometry Drift

From scan matching or wheel odometry, we chain relative poses:

\[ T_{B_{k+1}}^{W} = T_{B_k}^{W} \cdot T_{B_{k+1}}^{B_k} \]

Each relative measurement \(T_{B_{k+1}}^{B_k}\) has small errors that accumulate.

Drift Visualization

Loop Closure

When the robot revisits a location, we can detect this by matching scans.

This gives us a constraint between non-sequential poses!

Loop Closure

A loop closure is a measurement that relates poses far apart in time but close in space.

What Loop Closure Tells Us

If scan matching at time \(k\) finds a good match with the scan from time \(j\) (where \(j \ll k\)):

We obtain an estimate of \(T_{B_k}^{B_j}\) — the relative pose between poses \(j\) and \(k\).

Problem: This measurement contradicts our odometry chain!

- Odometry says: \(T_{B_k}^{B_j} = T_{B_{j+1}}^{B_j} \cdot T_{B_{j+2}}^{B_{j+1}} \cdots T_{B_k}^{B_{k-1}}\)

- Loop closure says: \(T_{B_k}^{B_j} \approx \tilde{T}_{B_k}^{B_j}\) (from scan matching)

The Optimization Problem

We want to find poses that best satisfy all constraints:

- Sequential constraints from odometry

- Loop closure constraints from scan matching

This is a nonlinear least squares problem!

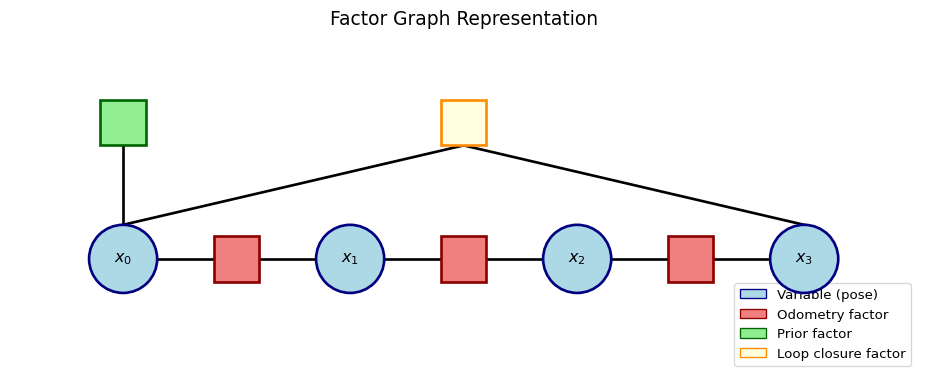

Part 2: Graph Structure

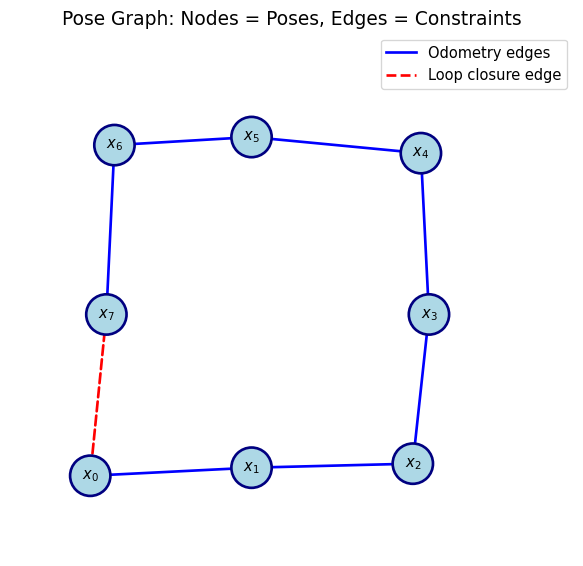

Pose Graph Representation

Graph Components

Nodes \(\mathcal{V} = \{x_0, x_1, \ldots, x_n\}\)

- Each node is a robot pose: \(x_i = (x_i, y_i, \theta_i) \in SE(2)\)

Edges \(\mathcal{E} = \{(i, j)\}\)

- Each edge represents a relative pose measurement \(\tilde{T}_{ij}\)

- Sequential edges: \((0,1), (1,2), \ldots\) from odometry

- Loop closure edges: \((j, k)\) where \(k \gg j\), from scan matching

Edge Measurement

An edge \((i, j)\) stores:

- Measured relative pose: \(\tilde{T}_{ij} = (\tilde{t}_x, \tilde{t}_y, \tilde{\theta})\)

- “Pose \(j\) as seen from pose \(i\)”

- Information matrix (optional): \(\Omega_{ij}\)

- Encodes measurement confidence

- More confident measurements have larger weight

Part 3: Least Squares Formulation

Residual for an Edge

Given estimated poses \(x_i = (x_i, y_i, \theta_i)\) and \(x_j = (x_j, y_j, \theta_j)\):

Predicted relative pose: \[ \hat{T}_{ij} = T_i^{-1} \circ T_j \]

Residual: Difference between predicted and measured \[ e_{ij} = \hat{T}_{ij} \ominus \tilde{T}_{ij} \]

SE(2) Residual Computation

In 2D, the residual has three components:

Cost Function

The total cost is the sum of squared residuals over all edges:

\[ F(x) = \sum_{(i,j) \in \mathcal{E}} e_{ij}^T \Omega_{ij} \, e_{ij} \]

where:

- \(e_{ij} \in \mathbb{R}^3\) is the residual for edge \((i, j)\)

- \(\Omega_{ij} \in \mathbb{R}^{3 \times 3}\) is the information matrix (often \(I_3\))

Note

This is exactly the nonlinear least squares problem from earlier lectures!

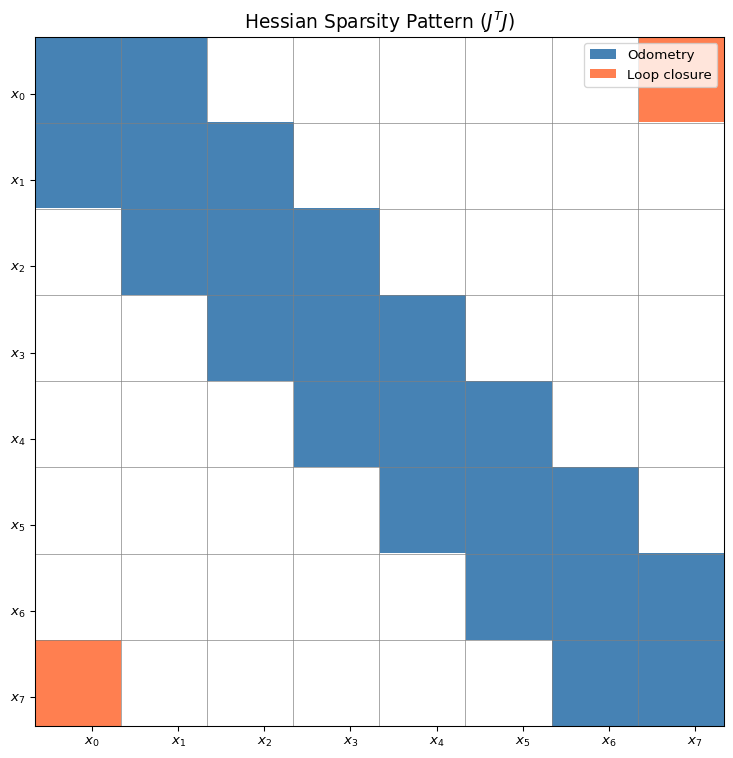

Jacobian Structure

The residual \(e_{ij}\) depends only on poses \(x_i\) and \(x_j\).

Jacobians: \[ A_{ij} = \frac{\partial e_{ij}}{\partial x_i} \in \mathbb{R}^{3 \times 3}, \quad B_{ij} = \frac{\partial e_{ij}}{\partial x_j} \in \mathbb{R}^{3 \times 3} \]

The full Jacobian \(J\) is sparse: most entries are zero!

Sparsity Pattern

Tip

Sparsity is what makes large-scale SLAM tractable!

Jacobian for SE(2) Edge

For residual \(e_{ij}\) with poses \(x_i\) and \(x_j\):

def compute_jacobians(xi, xj, z_ij):

"""

Compute Jacobians of residual w.r.t. poses xi and xj.

Returns: A (de/dxi), B (de/dxj), both 3x3

"""

theta_i = xi[2]

c, s = np.cos(theta_i), np.sin(theta_i)

dx = xj[0] - xi[0]

dy = xj[1] - xi[1]

# Jacobian w.r.t. xi

A = np.array([

[-c, -s, -s * dx + c * dy],

[ s, -c, -c * dx - s * dy],

[ 0, 0, -1]

])

# Jacobian w.r.t. xj

B = np.array([

[ c, s, 0],

[-s, c, 0],

[ 0, 0, 1]

])

return A, BGauss-Newton Update

Stack all poses into vector \(\mathbf{x} \in \mathbb{R}^{3n}\):

\[ \mathbf{x} = \begin{bmatrix} x_0 \\ y_0 \\ \theta_0 \\ x_1 \\ y_1 \\ \theta_1 \\ \vdots \end{bmatrix} \]

Gauss-Newton iteration: \[ H \, \Delta \mathbf{x} = -\mathbf{b} \] where \(H = J^T \Omega J\) (sparse!) and \(\mathbf{b} = J^T \Omega \, \mathbf{e}\)

Building the Linear System

def build_linear_system(poses, edges, measurements):

"""

Build H and b for Gauss-Newton.

poses: list of (x, y, theta)

edges: list of (i, j) pairs

measurements: list of (dx, dy, dtheta) for each edge

"""

n = len(poses)

H = np.zeros((3*n, 3*n))

b = np.zeros(3*n)

for (i, j), z_ij in zip(edges, measurements):

xi, xj = poses[i], poses[j]

e_ij = compute_residual(xi, xj, z_ij)

A, B = compute_jacobians(xi, xj, z_ij)

# Add to H (using Omega = I)

H[3*i:3*i+3, 3*i:3*i+3] += A.T @ A

H[3*i:3*i+3, 3*j:3*j+3] += A.T @ B

H[3*j:3*j+3, 3*i:3*i+3] += B.T @ A

H[3*j:3*j+3, 3*j:3*j+3] += B.T @ B

# Add to b

b[3*i:3*i+3] += A.T @ e_ij

b[3*j:3*j+3] += B.T @ e_ij

return H, bFixing the Gauge

The cost function is invariant to rigid transformations of all poses.

Problem: \(H\) is rank-deficient (3 degrees of freedom)

Solution: Fix the first pose \(x_0 = (0, 0, 0)\)

- Remove rows/columns for \(x_0\) from \(H\)

- Or add large diagonal entries: \(H_{0:3, 0:3} += \lambda I\)

Part 4: Implementation

Full Optimization Loop

def optimize_pose_graph(initial_poses, edges, measurements,

n_iter=10, fix_first=True):

"""

Optimize pose graph using Gauss-Newton.

"""

poses = [np.array(p) for p in initial_poses]

n = len(poses)

for iteration in range(n_iter):

H, b = build_linear_system(poses, edges, measurements)

# Fix first pose (add large weight)

if fix_first:

H[0:3, 0:3] += 1e6 * np.eye(3)

# Solve

dx = np.linalg.solve(H, -b)

# Update poses

for i in range(n):

poses[i] = poses[i] + dx[3*i:3*i+3]

# Normalize angle

poses[i][2] = np.arctan2(np.sin(poses[i][2]),

np.cos(poses[i][2]))

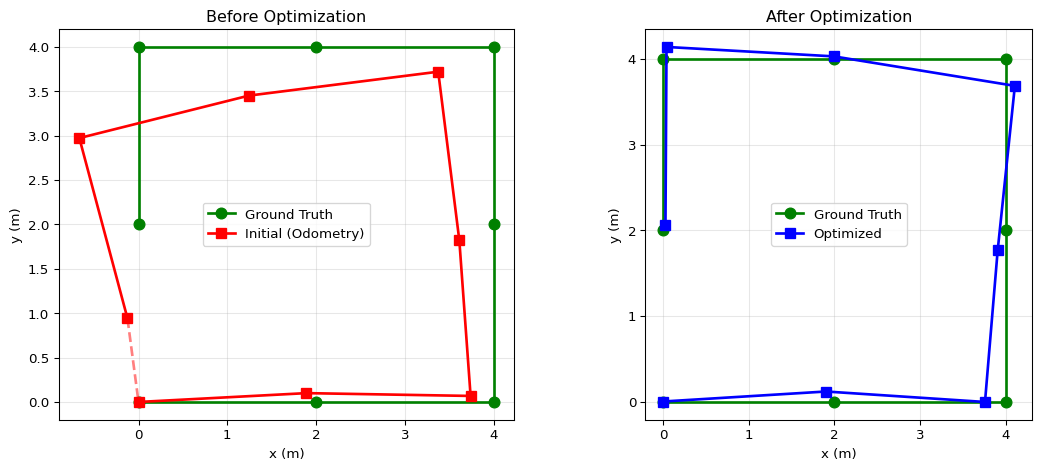

return posesExample: Square Loop

Code

np.random.seed(123)

# True poses: square path

true_poses = [

(0, 0, 0),

(2, 0, 0),

(4, 0, np.pi/2),

(4, 2, np.pi/2),

(4, 4, np.pi),

(2, 4, np.pi),

(0, 4, -np.pi/2),

(0, 2, -np.pi/2),

]

# Generate noisy odometry measurements

edges = [(i, i+1) for i in range(7)] # Sequential

edges.append((7, 0)) # Loop closure!

measurements = []

for i, j in edges:

Ti = pose_to_matrix(*true_poses[i])

Tj = pose_to_matrix(*true_poses[j])

T_rel = np.linalg.inv(Ti) @ Tj

z = matrix_to_pose(T_rel)

# Add noise (more noise on odometry, less on loop closure)

if j == i + 1: # Odometry

noise = np.array([0.1, 0.1, 0.05]) * np.random.randn(3)

else: # Loop closure (often more accurate)

noise = np.array([0.05, 0.05, 0.02]) * np.random.randn(3)

measurements.append(tuple(np.array(z) + noise))

# Initial poses from chaining odometry (with drift)

initial_poses = [np.array([0.0, 0.0, 0.0])]

T_acc = np.eye(3)

for i in range(7):

T_rel = pose_to_matrix(*measurements[i])

T_acc = T_acc @ T_rel

initial_poses.append(np.array(matrix_to_pose(T_acc)))

# Optimize

optimized_poses = optimize_pose_graph(initial_poses, edges, measurements, n_iter=20)

# Plot comparison

fig, axes = plt.subplots(1, 2, figsize=(12, 5))

# Before optimization

ax = axes[0]

init_arr = np.array(initial_poses)

true_arr = np.array(true_poses)

ax.plot(true_arr[:, 0], true_arr[:, 1], 'g-o', markersize=8, linewidth=2, label='Ground Truth')

ax.plot(init_arr[:, 0], init_arr[:, 1], 'r-s', markersize=8, linewidth=2, label='Initial (Odometry)')

# Draw loop closure gap

ax.plot([init_arr[-1, 0], init_arr[0, 0]], [init_arr[-1, 1], init_arr[0, 1]],

'r--', linewidth=2, alpha=0.5)

ax.set_xlabel('x (m)')

ax.set_ylabel('y (m)')

ax.set_aspect('equal')

ax.grid(True, alpha=0.3)

ax.legend()

ax.set_title('Before Optimization')

# After optimization

ax = axes[1]

opt_arr = np.array(optimized_poses)

ax.plot(true_arr[:, 0], true_arr[:, 1], 'g-o', markersize=8, linewidth=2, label='Ground Truth')

ax.plot(opt_arr[:, 0], opt_arr[:, 1], 'b-s', markersize=8, linewidth=2, label='Optimized')

ax.set_xlabel('x (m)')

ax.set_ylabel('y (m)')

ax.set_aspect('equal')

ax.grid(True, alpha=0.3)

ax.legend()

ax.set_title('After Optimization')

plt.tight_layout()

plt.show()

# Print errors

init_error = np.mean([np.linalg.norm(init_arr[i, :2] - true_arr[i, :2]) for i in range(8)])

opt_error = np.mean([np.linalg.norm(opt_arr[i, :2] - true_arr[i, :2]) for i in range(8)])

print(f"Mean position error - Initial: {init_error:.3f} m, Optimized: {opt_error:.3f} m")Mean position error - Initial: 0.593 m, Optimized: 0.152 m

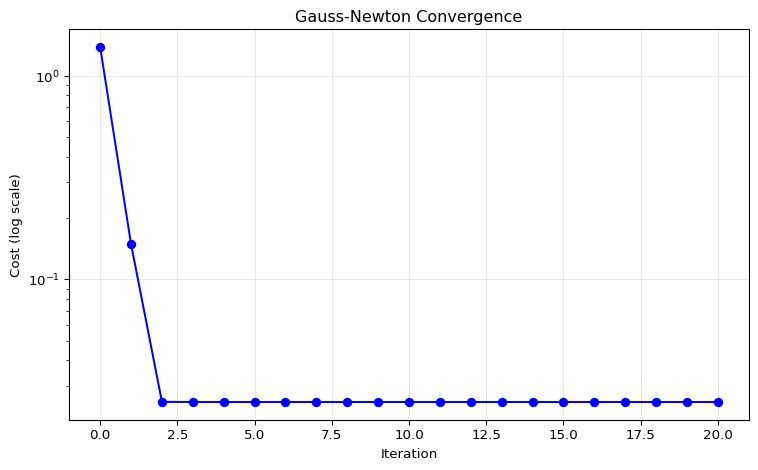

Convergence

Code

# Track cost over iterations

def compute_total_cost(poses, edges, measurements):

cost = 0

for (i, j), z_ij in zip(edges, measurements):

e = compute_residual(poses[i], poses[j], z_ij)

cost += e @ e

return cost

# Re-run optimization tracking cost

poses = [np.array(p) for p in initial_poses]

costs = [compute_total_cost(poses, edges, measurements)]

for iteration in range(20):

H, b = build_linear_system(poses, edges, measurements)

H[0:3, 0:3] += 1e6 * np.eye(3)

dx = np.linalg.solve(H, -b)

for i in range(len(poses)):

poses[i] = poses[i] + dx[3*i:3*i+3]

poses[i][2] = np.arctan2(np.sin(poses[i][2]), np.cos(poses[i][2]))

costs.append(compute_total_cost(poses, edges, measurements))

fig, ax = plt.subplots(figsize=(8, 5))

ax.semilogy(costs, 'b-o', markersize=6)

ax.set_xlabel('Iteration')

ax.set_ylabel('Cost (log scale)')

ax.set_title('Gauss-Newton Convergence')

ax.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

Note

Gauss-Newton converges quickly — typically 5-10 iterations suffice.

Part 5: Practical Considerations

Weighted Edges

Different measurements have different uncertainties.

Information matrix \(\Omega_{ij}\):

- Diagonal: \(\Omega = \text{diag}(\sigma_x^{-2}, \sigma_y^{-2}, \sigma_\theta^{-2})\)

- More confident measurements → larger \(\Omega\) → more influence

Cost function becomes: \[ F(x) = \sum_{(i,j) \in \mathcal{E}} e_{ij}^T \Omega_{ij} \, e_{ij} \]

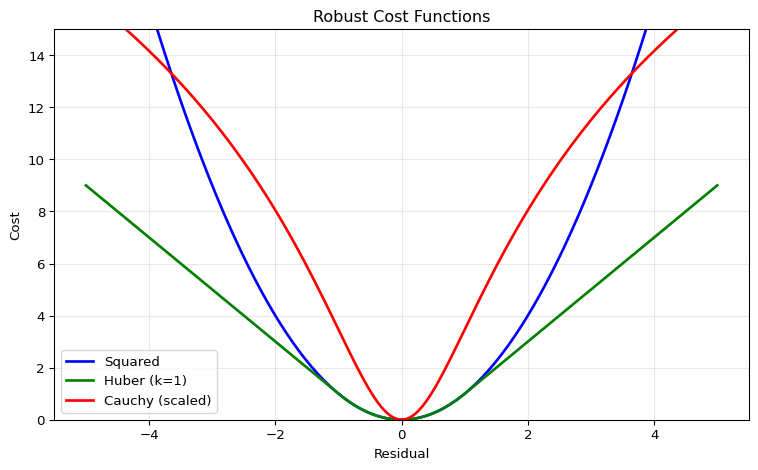

Robust Cost Functions

Loop closure detection can produce outliers (false matches).

Standard least squares is sensitive to outliers!

Solution: Use robust kernels

| Kernel | Formula | Effect |

|---|---|---|

| Squared | \(\rho(r) = r^2\) | Standard (sensitive) |

| Huber | \(\rho(r) = \begin{cases} r^2 & |r| \leq k \\ 2k|r| - k^2 & |r| > k \end{cases}\) | Linear growth for outliers |

| Cauchy | \(\rho(r) = \log(1 + r^2)\) | Bounded influence |

Robust Cost Visualization

Loop Closure Detection

We assumed loop closures are given. In practice, detecting them is challenging!

Approaches:

Scan-to-scan matching: Match current scan against all previous scans

- Expensive: \(O(n^2)\) comparisons

Scan-to-map matching: Match against accumulated map

Place recognition: Use descriptors to find candidate matches

- Bag-of-words, learning-based methods

Libraries for Pose Graph Optimization

In practice, use optimized libraries:

GTSAM (Georgia Tech Smoothing and Mapping)

- Factor graph formulation

- Incremental updates (iSAM2)

- Widely used in robotics research

g2o (General Graph Optimization)

- C++ library

- Used in ORB-SLAM, LSD-SLAM

Ceres Solver

- Google’s nonlinear least squares library

- Very flexible, automatic differentiation

Factor Graph View

GTSAM uses “factor graph” terminology:

Note

Variables are poses, factors are constraints (measurements). Same math, different visualization!

Connection to Your Assignment

Your scan matching assignment involves:

- Sequential scan matching → odometry edges

- Long-range scan matching → loop closure edges

- Match scan \(k\) against scans \(0, 1, \ldots, k-10\) (for example)

- If match quality is good, add loop closure edge

- Pose graph optimization → correct drift

- Build graph with all edges

- Optimize to find best poses

Summary

Problem: Odometry drift accumulates; loop closures provide constraints

Graph structure: Nodes = poses, edges = relative pose measurements

Formulation: Nonlinear least squares on SE(2) residuals

Key insight: Jacobian/Hessian is sparse → tractable for large graphs

Practice: Use robust kernels for outliers, libraries for efficiency

References

Grisetti, G., Kummerle, R., Stachniss, C., & Burgard, W. (2010). “A Tutorial on Graph-Based SLAM.” IEEE Intelligent Transportation Systems Magazine.

Dellaert, F., & Kaess, M. (2017). “Factor Graphs for Robot Perception.” Foundations and Trends in Robotics.

Carlone, L., et al. (2025). “Slam handbook: From localization and mapping to spatial intelligence.”.

GTSAM: https://gtsam.org

Pose Graph Optimization • ME 676 Home